The Beauty of Soft Decision Trees

Introduction

Decision trees work by making hard choices at each node: if \(X_1 > 5\), go left; otherwise, go right. The approach is clean, interpretable, and brittle. A point sitting just past the threshold gets treated exactly the same as one sitting far beyond it. Small perturbations in the data can flip a split condition and reorganize a large part of the tree.

Soft decision trees replace those binary splits with probability distributions. Instead of routing a data point exclusively to one branch, each node computes a probability of going left versus right. A point near the decision boundary goes roughly 50/50; one far from it gets routed with high confidence. The final prediction is a weighted combination of the leaf predictions, with the weights determined by the routing probabilities.
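To make the routing concrete, here is a minimal sketch of a single soft split on one feature, reusing the threshold of 5 from the hard rule above. The logistic form, the `sharpness` parameter, and the two leaf values are assumptions picked for illustration, not something the formulation requires.

```python
import math

def sigmoid(z):
    """Logistic function, mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def soft_split_predict(x1, threshold=5.0, sharpness=1.0,
                       left_value=0.0, right_value=1.0):
    """One soft split on a single feature (illustrative, hypothetical names).

    Mirrors the hard rule "if x1 > threshold go left, else go right",
    but returns a probability of going left instead of a yes/no answer.
    """
    p_left = sigmoid(sharpness * (x1 - threshold))
    # The prediction blends both leaves, weighted by the routing probability.
    prediction = p_left * left_value + (1.0 - p_left) * right_value
    return p_left, prediction

for x1 in (5.0, 5.5, 9.0):
    p_left, pred = soft_split_predict(x1)
    print(f"x1={x1}: p_left={p_left:.3f}, prediction={pred:.3f}")
```

A point exactly on the threshold gets a routing probability of 0.5, and the probability moves toward 1 as the point moves further past the threshold. Raising `sharpness` pushes the split back toward hard-threshold behavior.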

I find them genuinely interesting, not as a replacement for gradient-boosted trees in most practical settings, but as a design that takes uncertainty more seriously than the standard formulation does. Because every routing decision is a smooth function of the input, the whole tree is differentiable and can be trained with stochastic gradient descent. That also makes soft trees a natural fit for incremental learning, which is the connection I want to explore here.

Uncertainty in Decision Making

Standard decision trees don’t have a notion of confidence at the level of individual splits. The split is either made or not. This can lead to overconfident predictions, particularly near decision boundaries, and it means the routing gives no signal about how close an input came to being sent down a different branch.

Soft trees address this directly. Because each path through the tree carries a probability, the final prediction is naturally accompanied by a measure of confidence: a data point that flows through the tree with high probability at every node gets a high-confidence prediction; one that’s always split roughly 50/50 produces a more diffuse prediction. In applications where you need to know how sure the model is — medical diagnosis, financial risk assessment, anything where acting on a wrong high-confidence prediction is expensive — this matters.
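One way to turn that intuition into a number is to look at how spread out the probability mass is across the leaves. The sketch below does this for a depth-2 tree using Shannon entropy. Entropy is my choice of summary here, and the routing probabilities are made-up values, so treat it as an illustration, not a prescribed confidence measure.

```python
import math

def leaf_distribution(p_root, p_left_child, p_right_child):
    """Leaf probabilities for a depth-2 tree, ordered left to right.

    Each argument is that node's probability of routing left.
    """
    return [
        p_root * p_left_child,
        p_root * (1.0 - p_left_child),
        (1.0 - p_root) * p_right_child,
        (1.0 - p_root) * (1.0 - p_right_child),
    ]

def entropy_bits(probs):
    """Shannon entropy in bits; 0 means a single leaf holds all the mass."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)

# Hypothetical routing probabilities for two inputs.
confident = leaf_distribution(0.99, 0.98, 0.50)  # decisive at root and left child
ambiguous = leaf_distribution(0.50, 0.50, 0.50)  # a coin flip at every node

print(f"confident input: {entropy_bits(confident):.2f} bits")
print(f"ambiguous input: {entropy_bits(ambiguous):.2f} bits")
```

With four leaves, the entropy ranges from 0 bits (all mass on one leaf) to 2 bits (mass spread evenly), so low values correspond to the high-confidence case described above.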

Flexibility in Internal Nodes

One of the more interesting aspects of the soft decision tree formulation is that the routing probability at each node doesn’t have to come from a fixed functional form. You can use logistic regression, a small neural network, or any other model that produces a probability. This makes soft trees composable: they’re essentially a tree-shaped mixture model in which the mixing weights are learned functions of the input.

In practice, logistic regression at each node is common — it keeps the model interpretable, each node’s split can be read as a linear decision boundary, and it’s efficient to train with gradient descent. But the generality is there if you need it.
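As a rough sketch of the logistic-regression case, the forward pass of a complete binary soft tree can be written in a few lines. The heap-order node layout, the function names, and the example weights are all assumptions made for this illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def leaf_probabilities(x, weights, biases):
    """Probability of reaching each leaf of a complete binary soft tree.

    Inner nodes are stored in heap order (children of node i are 2i+1
    and 2i+2). Node i routes left with probability
    sigmoid(weights[i] . x + biases[i]), i.e. a logistic regression.
    """
    n_inner = len(weights)
    reach = [1.0] + [0.0] * (2 * n_inner)  # reach[0] is the root
    for i in range(n_inner):
        z = sum(w * xi for w, xi in zip(weights[i], x)) + biases[i]
        p_left = sigmoid(z)
        reach[2 * i + 1] = reach[i] * p_left
        reach[2 * i + 2] = reach[i] * (1.0 - p_left)
    return reach[n_inner:]  # the trailing entries are the leaves

def predict(x, weights, biases, leaf_values):
    """Weighted combination of leaf values, weighted by path probability."""
    probs = leaf_probabilities(x, weights, biases)
    return sum(p * v for p, v in zip(probs, leaf_values))

# Depth-2 tree on two features; each row is one node's linear boundary.
weights = [[1.0, 0.0], [0.0, 1.0], [0.0, -1.0]]
biases = [-5.0, 0.0, 2.0]
leaf_values = [10.0, 20.0, 30.0, 40.0]

print(leaf_probabilities([9.0, 3.0], weights, biases))
print(predict([9.0, 3.0], weights, biases, leaf_values))
```

Each row of `weights` together with its bias defines the hyperplane where that node is undecided, which is what makes an individual node readable as a linear decision boundary. Swapping the logistic unit for a different probability model would only change how `p_left` is computed.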

Distillation

One of the more compelling applications of soft decision trees comes from the paper “Distilling a Neural Network Into a Soft Decision Tree” by Nicholas Frosst and Geoffrey Hinton. The motivation is a well-known tension in deep learning: neural networks are accurate but opaque. You get good predictions and no explanation for them.

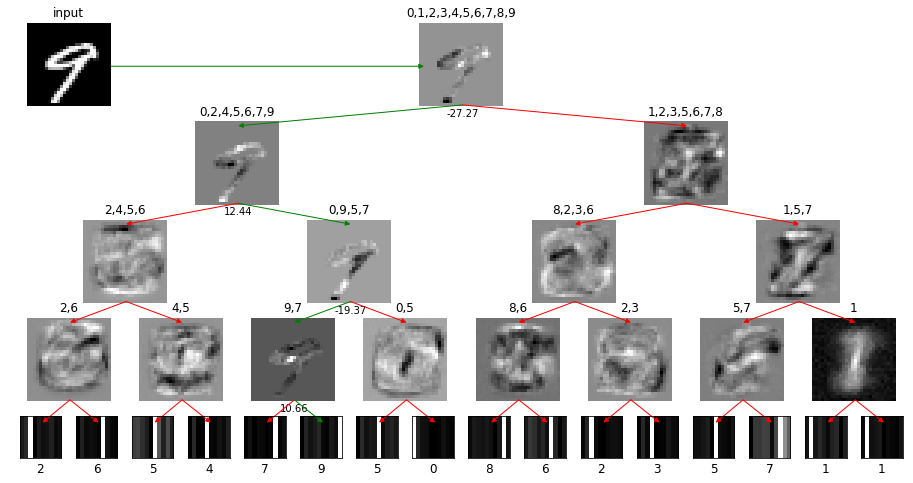

The idea is to train a soft decision tree to mimic a trained neural network, using the network’s output probabilities (soft targets) rather than the hard class labels. This is knowledge distillation applied to the problem of interpretability. The tree learns from the richer signal in the network’s output distribution — which encodes not just the predicted class but also the relative likelihood of other classes — and can learn from a large synthetic dataset generated by the network, not just the original training data.
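The difference between soft and hard targets is easy to see in a small example. The sketch below computes a cross-entropy loss for a tiny two-leaf tree against both kinds of target. The teacher logits, leaf distributions, and path probabilities are invented, and mixing the leaf distributions by path probability is one simple choice of student output, not necessarily the exact objective used in the paper.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert teacher logits into a probability distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(target, predicted, eps=1e-12):
    """Cross-entropy of the predicted distribution under the target."""
    return -sum(t * math.log(q + eps) for t, q in zip(target, predicted))

def tree_output(leaf_probs, leaf_dists):
    """Mix the leaves' class distributions by their path probabilities."""
    n_classes = len(leaf_dists[0])
    return [sum(p * dist[k] for p, dist in zip(leaf_probs, leaf_dists))
            for k in range(n_classes)]

# Hypothetical teacher output for one input with three classes.
teacher_logits = [4.0, 2.5, -1.0]
soft_target = softmax(teacher_logits)  # keeps the runner-up class visible
hard_target = [1.0, 0.0, 0.0]          # only says "class 0"

# Hypothetical student: two leaves and their path probabilities.
leaf_probs = [0.7, 0.3]
leaf_dists = [[0.85, 0.14, 0.01], [0.60, 0.35, 0.05]]
student = tree_output(leaf_probs, leaf_dists)

loss_soft = cross_entropy(soft_target, student)
loss_hard = cross_entropy(hard_target, student)
print(f"soft-target loss: {loss_soft:.3f}, hard-target loss: {loss_hard:.3f}")
```

With the hard target, only the probability assigned to class 0 affects the loss. With the soft target, the student is also rewarded for matching how much weight the teacher puts on the second and third classes, which is the richer signal described above.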

The result is a model that performs slightly worse than the original network (to be expected, since you’re compressing into a simpler structure) but produces decision paths that are actually readable. You can trace exactly which sequence of learned filters routed a given input to its prediction. On MNIST and Connect4, the paper reports competitive accuracy for the distilled soft tree, along with a transparency that the original network simply can’t provide.

The broader point is about the interpretability-accuracy tradeoff. Soft decision trees don’t fully close that gap, but the distillation approach is a practical way to get something you can explain without starting from scratch.